Research

I Replicated 6 Quantum Computing Papers on 3 Platforms. Here’s What Broke.

An AI agent autonomously ran 105+ experiments across IBM, Quantum Inspire, and IQM hardware. The failures were more interesting than the successes.

24 February 2026 · 14 min read

The question was simple: how might AI accelerate quantum computing research?

Not AI for quantum algorithms or quantum for AI. The more practical question: could an AI agent, specifically Claude Code with access to quantum hardware through Model Context Protocol (MCP) tools, autonomously replicate published experiments, benchmark hardware, and generate insights a human researcher would find useful?

Over the past two weeks, working at TU Delft with Quantum Inspire, I ran this experiment. Starting February 9th, the agent replicated six papers spanning 2014–2023, tested 27 quantitative claims across four hardware backends, and ran 105+ individual experiments. The results are live at quantuminspire.vercel.app.

25 of the 27 published claims (93%) replicated successfully. The two that didn’t were more interesting.

The Protocol

For each paper, the agent followed the same steps:

- Extract testable quantitative claims from the paper

- Implement circuits from the paper’s description (not from source code)

- Verify on an emulator (establish physics baseline)

- Run on all available hardware backends

- Apply error mitigation techniques

- Compare measured vs. published results

- Classify as pass or fail, with failure mode analysis
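The final classification step can be sketched as a tolerance check on each extracted claim. This is a minimal sketch, not the agent's code. It assumes every claim reduces to one number with an absolute agreement window. The names `ClaimResult`, `tolerance`, and `replication_rate` are hypothetical, and the article does not say how tolerances were chosen.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class ClaimResult:
    """One quantitative claim and its replication outcome (hypothetical schema)."""
    paper: str              # e.g. "Cross et al. 2019"
    claim: str              # what the paper asserts, in words
    published: float        # value reported in the paper
    measured: float         # value obtained on hardware after mitigation
    tolerance: float        # assumed absolute agreement window for this claim
    failure_mode: Optional[str] = None  # filled in by analysis when a claim fails

    @property
    def passed(self) -> bool:
        # Pass if the measured value lies within the tolerance of the published one.
        return abs(self.measured - self.published) <= self.tolerance


def replication_rate(results: list[ClaimResult]) -> float:
    """Fraction of claims classified as pass."""
    return sum(r.passed for r in results) / len(results)


# Made-up numbers, for illustration only.
demo = [
    ClaimResult("Paper A", "claim 1", published=0.50, measured=0.47, tolerance=0.05),
    ClaimResult("Paper B", "claim 2", published=2.0, measured=2.6, tolerance=0.2,
                failure_mode="hypothetical: readout error"),
]
print([r.passed for r in demo], replication_rate(demo))
```

With 25 passing and 2 failing claims, `replication_rate` returns 25/27 ≈ 0.926, which rounds to the 93% quoted above.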

The six papers: Peruzzo et al. 2014 (VQE on HeH+), Kandala et al. 2017 (hardware-efficient VQE), Cross et al. 2019 (quantum volume), Sagastizabal et al. 2019 (VQE with error mitigation), Harrigan et al. 2021 (QAOA MaxCut), and Kim et al. 2023 (utility-scale circuits).

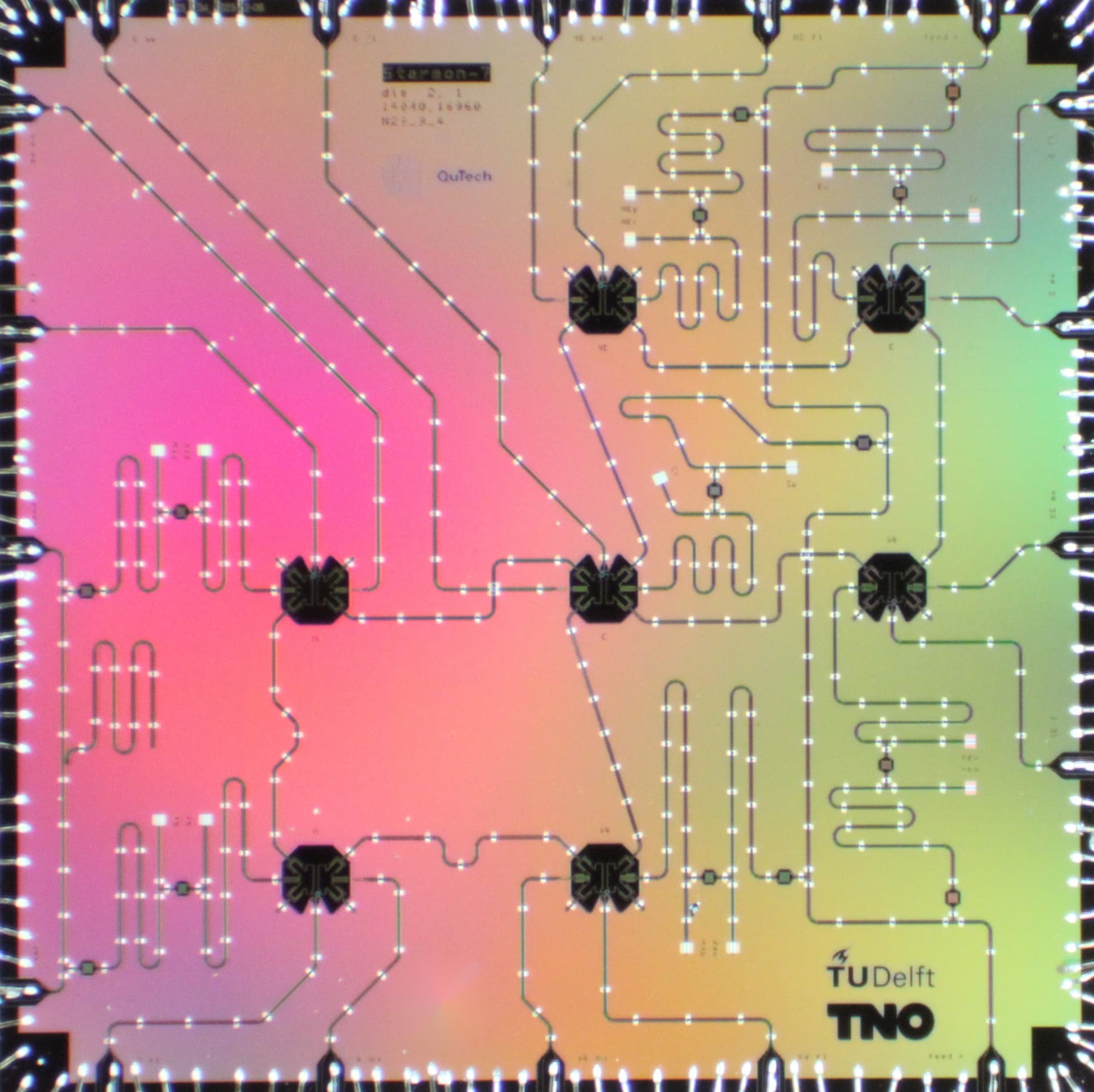

Hardware: IBM Torino (133 qubits), Quantum Inspire’s Tuna-9 (9 qubits), and IQM Garnet (20 qubits). Plus a noiseless emulator as ground truth.

The Flagship Result: Chemical Accuracy on Real Hardware

The most exciting result came from VQE (Variational Quantum Eigensolver) calculations on the hydrogen molecule. VQE uses a quantum computer to find the ground-state energy of a molecule — the quantum chemistry “hello world.”

The benchmark for success is chemical accuracy: getting within 1.6 milliHartree (about 1 kcal/mol) of the exact answer. Below this threshold, the quantum result is useful for actual chemistry.
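To make the VQE loop and the chemical-accuracy test concrete, here is a classical toy version in NumPy. It is a sketch under stated assumptions, not the experiment. The six Pauli coefficients are illustrative placeholders of roughly the right size for a two-qubit H2 Hamiltonian, not values from the article. The one-parameter ansatz cos θ|01⟩ + sin θ|10⟩ is also an assumption, and a brute-force scan over θ stands in for the classical optimizer that would drive a real QPU.

```python
import numpy as np

HARTREE_TO_KCAL_PER_MOL = 627.509  # 1 Hartree expressed in kcal/mol
CHEMICAL_ACCURACY_HA = 1.6e-3      # ~1 kcal/mol

# Single-qubit Pauli matrices.
PAULI = {
    "I": np.eye(2, dtype=complex),
    "X": np.array([[0, 1], [1, 0]], dtype=complex),
    "Y": np.array([[0, -1j], [1j, 0]], dtype=complex),
    "Z": np.array([[1, 0], [0, -1]], dtype=complex),
}

# PLACEHOLDER coefficients in Hartree (not the article's values). Labels are read
# left to right as Kronecker factors, so "ZI" is Z on the first qubit.
COEFFS = {"II": -0.4804, "ZI": 0.3435, "IZ": -0.4347,
          "ZZ": 0.5716, "XX": 0.0910, "YY": 0.0910}


def hamiltonian(coeffs: dict) -> np.ndarray:
    """Build the 4x4 matrix sum_P c_P * P from two-letter Pauli labels."""
    return sum(c * np.kron(PAULI[p[0]], PAULI[p[1]]) for p, c in coeffs.items())


def ansatz(theta: float) -> np.ndarray:
    """Hypothetical one-parameter state cos(theta)|01> + sin(theta)|10>."""
    psi = np.zeros(4, dtype=complex)
    psi[1] = np.cos(theta)  # |01>
    psi[2] = np.sin(theta)  # |10>
    return psi


def energy(theta: float, H: np.ndarray) -> float:
    """Expectation value <psi(theta)|H|psi(theta)>; on hardware this is estimated from shots."""
    psi = ansatz(theta)
    return float(np.real(np.conj(psi) @ H @ psi))


H = hamiltonian(COEFFS)
exact = float(np.linalg.eigvalsh(H)[0])          # exact ground-state energy
thetas = np.linspace(0.0, np.pi, 20001)          # classical outer loop: brute-force scan
vqe = min(energy(t, H) for t in thetas)
error_ha = abs(vqe - exact)
print(f"exact {exact:.6f} Ha, VQE {vqe:.6f} Ha, "
      f"error {error_ha * HARTREE_TO_KCAL_PER_MOL:.4f} kcal/mol, "
      f"chemically accurate: {error_ha < CHEMICAL_ACCURACY_HA}")
```

On hardware, `energy` would be estimated from measured Pauli expectation values instead of being computed from the state vector. That is exactly where readout and gate noise enter.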

On IBM Torino with TREX (Twirled Readout Error Extinction) mitigation, the error was 0.22 kcal/mol. That’s a 119x improvement over the raw hardware error of 26.2 kcal/mol, and it clears the chemical accuracy threshold by a wide margin.

On Quantum Inspire’s Tuna-9, a hybrid post-selection + readout error mitigation strategy reached 0.92 kcal/mol. That is also within chemical accuracy, on a 9-qubit academic platform.
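Post-selection discards shots that violate a symmetry the ideal state must obey, at no extra QPU cost. Below is a minimal sketch. For illustration it assumes that valid two-qubit outcomes have odd parity and that bitstrings follow the Qiskit convention, where the last character is qubit 0. The article does not specify which symmetry the agent checked, and `postselect_parity` and `z_expectation` are hypothetical helpers.

```python
def postselect_parity(counts: dict[str, int], parity: int = 1) -> dict[str, int]:
    """Keep only bitstrings whose number of 1s has the given parity."""
    return {b: c for b, c in counts.items() if b.count("1") % 2 == parity}


def z_expectation(counts: dict[str, int], qubit: int) -> float:
    """<Z_qubit>, assuming Qiskit-style strings where the LAST character is qubit 0."""
    total = sum(counts.values())
    signed = sum(c if b[-1 - qubit] == "0" else -c for b, c in counts.items())
    return signed / total


raw_counts = {"01": 480, "10": 470, "00": 30, "11": 20}  # made-up counts
kept = postselect_parity(raw_counts, parity=1)            # drops "00" and "11"
print(kept, sum(kept.values()) / sum(raw_counts.values()), z_expectation(kept, 0))
```

Readout error mitigation would then be applied to the surviving counts. The article does not say in which order the hybrid strategy combined the two steps.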

Why HeH+ Fails but H2 Succeeds

HeH+ (helium hydride) uses the exact same circuit structure as H2. Same number of qubits, same gates, same hardware. But the best result on HeH+ was 4.31 kcal/mol — not even close to chemical accuracy.

The agent eventually identified why: coefficient amplification. In the Hamiltonian decomposition, each molecule has coefficients that weight different measurement bases. For H2, that ratio is modest. For HeH+, it’s about five times larger.

This matters because hardware readout errors are concentrated in the Z-basis, so a molecule with a large Z-coefficient amplifies those errors. The relationship is strongly superlinear: a 1.8x increase in the coefficient ratio produces a 20x increase in energy error, roughly the ratio raised to the fifth power (1.8^5 ≈ 19).

The practical threshold for chemical accuracy: a coefficient ratio below about 5. Above that, you need progressively more expensive mitigation techniques. This gives experimentalists a way to predict whether a VQE calculation will succeed on a given hardware platform before running it — saving expensive QPU time.
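The power law can be turned into the kind of pre-run check described above. This sketch assumes error ∝ (coefficient ratio)^k, with k fitted from the single 1.8x → 20x data point quoted earlier. The helpers `fitted_exponent`, `predicted_error`, and `max_ratio_multiplier` are hypothetical, and one data point is thin evidence for an exponent.

```python
import math

CHEMICAL_ACCURACY_KCAL = 1.0  # ~1.6 mHa


def fitted_exponent(ratio_factor: float, error_factor: float) -> float:
    """Exponent k such that error_factor == ratio_factor ** k."""
    return math.log(error_factor) / math.log(ratio_factor)


def predicted_error(ref_error: float, ratio_factor: float, k: float) -> float:
    """Extrapolate a reference error to a coefficient ratio ratio_factor times larger."""
    return ref_error * ratio_factor ** k


def max_ratio_multiplier(ref_error: float, k: float,
                         threshold: float = CHEMICAL_ACCURACY_KCAL) -> float:
    """Largest ratio_factor that keeps predicted_error at or below threshold."""
    return (threshold / ref_error) ** (1.0 / k)


k = fitted_exponent(1.8, 20.0)  # about 5.1
print(f"k = {k:.2f}")
print(f"{predicted_error(0.22, 1.8, k=5):.2f} kcal/mol at 1.8x the reference ratio")
print(f"chemical accuracy holds up to {max_ratio_multiplier(0.22, k=5):.2f}x the reference ratio")
```

Taking the 0.22 kcal/mol H2 result as the reference with k = 5, the sketch predicts that chemical accuracy survives only up to about 1.35 times the H2 coefficient ratio, assuming the same hardware and mitigation.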

More Mitigation Is Not Better

Stacking error mitigation techniques often makes results worse. The agent systematically tested 8 mitigation configurations on H2. The main results:

- TREX alone: 0.22 kcal/mol (best)

- TREX + dynamical decoupling: 1.33 kcal/mol

- Post-selection (free, offline): 1.66 kcal/mol

- TREX + dynamical decoupling + twirling: 10.0 kcal/mol (44x worse than TREX alone)

- ZNE (zero-noise extrapolation), linear: 12.84 kcal/mol

- Raw, no mitigation: 26.2 kcal/mol

- ZNE, exponential: NaN (complete failure)

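Adding dynamical decoupling and twirling on top of TREX made things 44x worse. ZNE did not pay off: linear extrapolation roughly halved the raw error but stayed far behind TREX alone, and the exponential fit failed outright. More shots didn’t help either (16K shots with TREX: 3.77 kcal/mol vs. 4K shots: 0.22 kcal/mol).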
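Zero-noise extrapolation runs the circuit at deliberately amplified noise levels and extrapolates the measured expectation value back to zero noise. The sketch below contrasts a linear fit with an exponential (log-linear) fit. It shows one plausible route to the NaN above: a log-linear fit is undefined when any value is zero or negative, as an energy usually is. The article does not say what actually failed, and the noise scales and data here are made up.

```python
import numpy as np


def zne_linear(scales, values) -> float:
    """Fit E(lam) = a + b*lam and return a, the estimate at zero noise."""
    slope, intercept = np.polyfit(scales, values, 1)
    return float(intercept)


def zne_exponential(scales, values) -> float:
    """Fit E(lam) = A*exp(-b*lam) as a straight line through log(E); return A.

    The log-linear fit only works if every value is positive; otherwise log()
    yields NaN or -inf and the extrapolation is reported as NaN.
    """
    values = np.asarray(values, dtype=float)
    with np.errstate(invalid="ignore", divide="ignore"):
        logs = np.log(values)
    if not np.all(np.isfinite(logs)):
        return float("nan")
    slope, intercept = np.polyfit(scales, logs, 1)
    return float(np.exp(intercept))


scales = np.array([1.0, 3.0, 5.0])        # noise amplification factors (e.g. gate folding)
decaying = 0.8 * np.exp(-0.1 * scales)    # synthetic expectation value decaying with noise
print(zne_linear(scales, decaying), zne_exponential(scales, decaying))
print(zne_exponential(scales, [-1.10, -1.02, -0.95]))  # negative, energy-like data
```

The linear fit underestimates the zero-noise value when the true decay is curved, while the exponential fit recovers it exactly on this synthetic data but breaks on sign-changing or negative inputs.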

The explanation is circuit depth. For a shallow circuit (H2 VQE has just one CZ gate after transpilation), readout error dominates — over 80% of the total error budget. TREX targets readout error specifically. ZNE amplifies gate noise, which isn’t the bottleneck. Twirling randomizes the correlations that TREX exploits.
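Why readout mitigation works so well on a shallow circuit can be shown with a single-qubit Monte Carlo sketch of the idea behind TREX. It assumes an independent, asymmetric bit-flip readout model with made-up error rates. Real TREX calibrates a rescaling factor per measured Pauli observable across many qubits, and `readout` and `twirled_z` are hypothetical helpers. TREX's own measurement twirling applies a random X before readout and undoes it classically. This equalizes the flip probability for 0 and 1, so the error becomes a pure rescaling ⟨Z⟩_noisy = f·⟨Z⟩_ideal with f = 1 − p01 − p10. Calibrating on |0⟩ estimates f, which is then divided out.

```python
import numpy as np


def readout(bits: np.ndarray, p01: float, p10: float, rng: np.random.Generator) -> np.ndarray:
    """Asymmetric readout error: 0 is read as 1 with prob p01, 1 is read as 0 with prob p10."""
    r = rng.random(bits.shape)
    flips = np.where(bits == 0, r < p01, r < p10)
    return bits ^ flips.astype(bits.dtype)


def twirled_z(bits: np.ndarray, p01: float, p10: float, rng: np.random.Generator) -> float:
    """<Z> estimated with measurement twirling: random X before readout, undone classically."""
    x = rng.integers(0, 2, size=bits.shape, dtype=bits.dtype)
    outcomes = readout(bits ^ x, p01, p10, rng) ^ x
    return float(np.mean(1 - 2 * outcomes))


rng = np.random.default_rng(seed=1)
shots, p01, p10 = 200_000, 0.02, 0.08  # made-up error rates, so f is about 0.9

# Calibration: prepare |0>, whose ideal <Z> is exactly 1, so the estimate is f itself.
f = twirled_z(np.zeros(shots, dtype=np.int64), p01, p10, rng)

# Experiment: a state with P(1) = 0.2, so the ideal <Z> is 0.6.
state_bits = (rng.random(shots) < 0.2).astype(np.int64)
raw = twirled_z(state_bits, p01, p10, rng)
mitigated = raw / f
print(f"f = {f:.3f}, raw <Z> = {raw:.3f}, mitigated <Z> = {mitigated:.3f}")
```

Nothing here touches gate noise. This is consistent with the rule of thumb that readout mitigation fits circuits where readout error dominates.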

The rule of thumb the agent derived: readout mitigation for shallow circuits, gate-noise mitigation for deep circuits. The Kim et al. 2023 replication confirmed this — for kicked Ising circuits with 100 CZ gates, ZNE provided a 2.3x improvement while TREX was marginal (1.3x).

What the Agent Got Wrong

The agent made real mistakes, and because the full transcript was logged, every error is traceable. The most instructive:

Coefficient conventions. The agent initially used Bravyi-Kitaev tapered coefficients instead of sector-projected ones. This produced wrong energies for weeks. The agent eventually self-corrected by computing reference values from scratch with PySCF — but weeks of results had to be reanalyzed.

Incomplete analysis. The agent reported the H2 IBM error as 9.2 kcal/mol for weeks. The stored analysis was incomplete — it had missed post-selection. Offline reanalysis of the same raw data revealed the true error was 1.66 kcal/mol. The data was much better than reported. The stored analysis, not the measured data, was the error.

Bitstring ordering. IBM returns bitstrings MSB-first (in Qiskit, qubit 0 is the rightmost character); Tuna-9 returns them LSB-first (qubit 0 comes first). The agent’s initial Tuna-9 results were nonsensical until it established a canonical bitstring ordering.
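Establishing a canonical ordering amounts to converting every backend's strings into one explicit, qubit-indexed form before any analysis. This is a minimal sketch. The helpers `to_qubit_order` and `canonicalize_counts` are hypothetical, and the convention labels simply follow the article's description: MSB-first means the last character is qubit 0, as in Qiskit, and LSB-first means the first character is qubit 0.

```python
def to_qubit_order(bitstring: str, convention: str) -> tuple:
    """Return outcomes indexed by qubit: result[q] is the bit measured on qubit q."""
    bits = [int(ch) for ch in bitstring]
    if convention == "msb_first":      # last character is qubit 0 (Qiskit style)
        bits.reverse()
    elif convention != "lsb_first":    # first character is qubit 0
        raise ValueError(f"unknown convention: {convention!r}")
    return tuple(bits)


def canonicalize_counts(counts: dict, convention: str) -> dict:
    """Re-key a counts dictionary by qubit-indexed tuples, merging any duplicates."""
    out: dict = {}
    for bitstring, n in counts.items():
        key = to_qubit_order(bitstring, convention)
        out[key] = out.get(key, 0) + n
    return out


# The same physical outcome (qubit 0 = 1, qubits 1 and 2 = 0) in both conventions.
ibm_style = {"001": 90, "000": 10}
lsb_style = {"100": 90, "000": 10}
print(canonicalize_counts(ibm_style, "msb_first") == canonicalize_counts(lsb_style, "lsb_first"))
```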

These are the same mistakes human researchers make. The difference: every step is logged, so catching and correcting them is much faster.

Can LLMs Write Quantum Code?

As a side investigation, the agent benchmarked LLM quantum code generation against Qiskit HumanEval — 151 quantum programming tasks at three difficulty levels.

Claude Opus and Gemini 3 Flash both scored about 63% zero-shot. With dynamic per-task RAG (retrieving relevant Qiskit documentation for each specific problem), both improved to about 71%. A three-run ensemble (majority vote) reached 71.5%, and a union strategy (any run passes) hit 79.5%.
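The aggregation strategies differ only in how per-run pass/fail results are combined for each task. This is a minimal sketch, assuming each run yields one boolean per task. The function names are hypothetical, and the article does not spell out tie handling, though with three runs a majority is never tied.

```python
def majority_vote_rate(runs: list[list[bool]]) -> float:
    """Fraction of tasks passed by more than half of the runs."""
    n_runs, n_tasks = len(runs), len(runs[0])
    solved = sum(1 for t in range(n_tasks) if 2 * sum(run[t] for run in runs) > n_runs)
    return solved / n_tasks


def union_rate(runs: list[list[bool]]) -> float:
    """Fraction of tasks passed by at least one run."""
    n_tasks = len(runs[0])
    return sum(1 for t in range(n_tasks) if any(run[t] for run in runs)) / n_tasks


def never_solved(runs: list[list[bool]]) -> list[int]:
    """Indices of tasks that no run passes."""
    return [t for t in range(len(runs[0])) if not any(run[t] for run in runs)]


# Three made-up runs over four tasks.
runs = [
    [True, False, False, True],
    [True, True, False, False],
    [False, True, False, False],
]
print(majority_vote_rate(runs), union_rate(runs), never_solved(runs))
```

The union rate and the unsolved count are two views of the same set: 151 − 31 = 120 tasks, and 120/151 ≈ 79.5%.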

The ceiling is real: 31 tasks (20.5%) remained unsolved across all strategies. Of the failures, 41% were logic errors — reasoning mistakes, not documentation gaps. RAG helps with API staleness but can’t fix bad reasoning.

What This Cost

The total compute cost for the entire project: about $15. All quantum hardware access was free tier (IBM: 10 min/month, IQM: 30 credits/month, Tuna-9: unlimited academic access). The cost was almost entirely AI API calls.

The agent ran 105+ experiments across four backends, submitted 250,000+ measurement shots, and operated autonomously for 4–6 hour stretches — submitting jobs, waiting for results, analyzing, and queuing next experiments.

Total QPU time consumed: less than 10 minutes.

What I Actually Learned

The agent did competent lab work, not novel research. It started from zero quantum knowledge and, through systematic experimentation, discovered the full topology of a previously undocumented 9-qubit processor in 33 jobs. It found the coefficient amplification framework. It produced a comprehensive error mitigation ranking that would save any experimentalist days of trial and error.

But it didn’t have physical intuition. It couldn’t predict that calibration drift would invalidate pre-computed VQE parameters within hours. It couldn’t tell you why TREX works better than ZNE for shallow circuits without being prompted to analyze the error budget. Good lab technician. Not a physicist.

For quantum computing in 2026, that’s exactly what’s needed. The field doesn’t need more theoretical insight right now. It needs systematic characterization, cross-platform comparison, and honest failure analysis. An agent that can run 100 experiments overnight and produce a structured report by morning is genuinely useful.

How It Was Built

The project spanned 469 Claude Code sessions and 16,837 prompts over three weeks in February 2026. The agent did the quantum computing. I steered:

> Can you use a quantum computer to do basic operations like addition?

# Feb 9 — setting the vision

> get me on github and start organizing this project as an exploration of accelerating science with generative ai. This should make Delft look super cutting edge in Quantum and AI.

# Feb 10 — surface code on real hardware

> wait, it was that easy? Are you sure that is real?

> was it real data? I’m worried it was faked

# Feb 10 — asking the hard question

> can you act like a critical reviewer and look through the site and the experiments and results and try to poke holes?

> yeah, actually AI did it all. I’m just prompting here.

> Really, you didn’t find any LLM bs faked data or anything?

# Feb 12 — scaling up

> apply the error mitigation across all 7 bond distances

> do all of them

The “was it real data? I’m worried it was faked” prompt captures the central anxiety of AI-accelerated science: how do you trust results when the agent does everything? The answer was running the same experiments across three independent hardware platforms and checking whether results converge. They mostly did.

The full results, including interactive experiment dashboards, are at quantuminspire.vercel.app. The replication reports, raw data, and agent transcripts are on GitHub.