
To Create a Second Renaissance, Translate the First

533,000 Latin editions were printed between 1450 and 1700. Only about 3% have ever been translated into English. We built what we believe is the first comprehensive census to map the gap.

28 January 2026

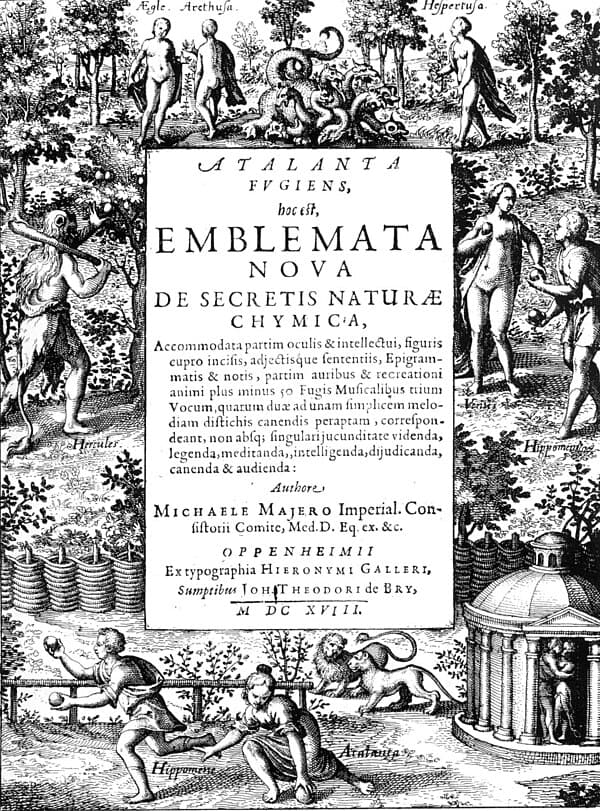

The original Renaissance was, at its core, a translation project. Scholars in 14th-century Italy rediscovered ancient Greek and Roman texts, some of which had survived through Arabic translations, and rendered the Greek into Latin. That flood of recovered knowledge reshaped European civilization. Copernicus read Ptolemy. Vesalius read Galen. Machiavelli read Livy. The ideas weren’t new; they were ancient. But making them readable again changed how people thought.

And yet the Renaissance produced an enormous body of scholarship — five centuries of scientific, philosophical, theological, and literary work, most of it written in Latin — and we have largely forgotten it. Not because the books were lost. They weren’t. They sit in libraries across Europe, many of them digitized and freely accessible online. We forgot them because almost nobody reads Latin anymore.

The Numbers

The Universal Short Title Catalogue (USTC) records 1.6 million editions printed in Europe between 1450 and 1700. Of those, 533,307 are in Latin — 30.9% of all European printing.

About 94,000 of these Latin editions have been digitized (18%). About 42,000 have searchable text via OCR or transcription (8%). And roughly 16,000 have any kind of English translation (3%).

That leaves over 500,000 Latin editions effectively inaccessible to English-speaking scholars, students, and the general public.
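
The percentages above follow directly from the quoted counts. A quick check, using only the figures in the text (the three smaller counts are rounded there, so the shares are approximate):

```python
# Counts quoted in the text; the funnel figures are rounded in the source.
LATIN_EDITIONS = 533_307
FUNNEL = {
    "digitized": 94_000,
    "searchable text": 42_000,
    "English translation": 16_000,
}


def share(count: int, total: int = LATIN_EDITIONS) -> float:
    """Return count as a percentage of total."""
    return 100 * count / total


for stage, count in FUNNEL.items():
    print(f"{stage:>20}: {count:>7,} ({share(count):.1f}%)")

# Editions with no English translation of any kind.
untranslated = LATIN_EDITIONS - FUNNEL["English translation"]
print(f"{'untranslated':>20}: {untranslated:>7,}")
```

Each stage is measured against all Latin editions, not against the stage before it, so the funnel narrows from about 18% to 8% to 3% of the same total.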

To understand what that means, consider what those books contain. The USTC categorizes them:

- University publications: 147,859 (28%)

- Religious texts: 118,250 (22%)

- Jurisprudence: 35,243 (7%)

- Classical authors (reprints): 17,221

- Educational books: 14,775

- Poetry: 14,022

- Medical texts: 13,357

- Philosophy and morality: 9,228

Half of Latin printing was university dissertations and religious works, precisely the categories with the lowest modern translation rates. The university publications alone represent nearly 150,000 editions of early modern scholarship, most of which have never been made available in any modern language.

The Census

Starting in December 2025, we built what we believe is the first comprehensive census of Latin-to-English translations: 7,542 records spanning 1800 to 2025, compiled from the UNESCO Index Translationum, Open Library, Internet Archive, and over 50 publisher catalogs. By late December, we had enriched 99.9% of the USTC’s 1.6 million edition records with AI-generated metadata. By late January, the census was complete.

Some findings:

Translation output peaked in the 2000s, with 1,345 translations published in that decade. Our scraped data shows only about 400 for the 2010s, which suggests a sharp decline.

Geographic concentration is extreme. The United States produced 55% of all Latin-to-English translations. The UK produced 35%. Everyone else combined: less than 10%.

The most-translated author is Pope John Paul II with 93 works — skewing the corpus toward modern religious texts. The most-translated classical work is Virgil’s Aeneid with 34 editions. Ovid’s Metamorphoses follows with 24.

A handful of publishers do most of the work. Harvard’s I Tatti Renaissance Library, the Dumbarton Oaks Medieval Library, the Loeb Classical Library, and Catholic University of America Press together account for a large share of scholarly translation. When these series slow down, translation output drops noticeably.

The Paradox of Latin

Latin’s share of European publishing followed a remarkable trajectory. In the 1470s, about 80% of printed books were in Latin. By the 1520s, that share had dropped to 45% as vernacular publishing exploded. It held at around 47% into the 1600s, then collapsed to 20% by the 1690s.

The paradox: as Latin faded from common use, it accumulated five centuries of scholarship. When it stopped being widely read, that scholarship froze in time. The books didn’t disappear. They just became invisible.

Take early modern medicine. The 13,000+ medical texts in the USTC represent a detailed record of how European physicians thought about disease, treatment, anatomy, and pharmacology during a period of extraordinary intellectual ferment. Historians of medicine can access a handful of canonical translations — Vesalius, Harvey, Paracelsus — but the vast majority of this literature has never been read in English.

The same is true for philosophy, jurisprudence, natural history, mathematics, astronomy, and theology. We have detailed studies of a few towering figures and almost no access to the broader intellectual context they worked within.

Why AI Changes the Equation

Human translation of a Latin scholarly text takes months to years per volume. A skilled translator might produce 5–10 pages of publication-quality translation per day. At that rate, translating even 1% of the untranslated Latin corpus, roughly 5,000 editions, would take a single translator centuries.

AI translation is not publication-quality. But it doesn’t need to be to change the equation. Research-grade AI translation — accurate enough for discovery, for following an argument, for identifying passages worth serious scholarly attention — can be produced at thousands of pages per hour for about $0.001 per page.
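
To make the comparison concrete, here is a back-of-the-envelope sketch. The translator pace and the per-page cost come from the text above. The average edition length, the number of working days per year, and the exact AI throughput are assumptions made purely for illustration, and the result scales linearly with each of them.

```python
UNTRANSLATED_EDITIONS = 533_307 - 16_000  # from the counts quoted earlier
PAGES_PER_EDITION = 200        # ASSUMPTION: illustrative, not a census figure
WORKING_DAYS_PER_YEAR = 250    # ASSUMPTION
AI_PAGES_PER_HOUR = 2_000      # ASSUMPTION: one reading of "thousands of pages per hour"
AI_USD_PER_PAGE = 0.001        # from the text


def human_years(pages: int, pages_per_day: float) -> float:
    """Working years for one translator at a given daily pace."""
    return pages / pages_per_day / WORKING_DAYS_PER_YEAR


def ai_hours(pages: int) -> float:
    """Machine hours at the assumed throughput."""
    return pages / AI_PAGES_PER_HOUR


def ai_cost_usd(pages: int) -> float:
    """Cost at the quoted per-page price."""
    return pages * AI_USD_PER_PAGE


one_percent_editions = UNTRANSLATED_EDITIONS // 100
pages = one_percent_editions * PAGES_PER_EDITION

print(f"1% of the backlog: {one_percent_editions:,} editions, {pages:,} pages")
print(f"human, 10 pages/day: {human_years(pages, 10):,.0f} translator-years")
print(f"human,  5 pages/day: {human_years(pages, 5):,.0f} translator-years")
print(f"AI: {ai_hours(pages):,.0f} hours, ${ai_cost_usd(pages):,.2f}")
```

Under these assumptions, 1% of the backlog is about a million pages: somewhere between 400 and 800 translator-years of human effort, against roughly a thousand dollars of research-grade machine translation.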

We’re not trying to replace human translators. We’re building the index. Right now, a scholar studying early modern chemistry has no way to know what’s in the thousands of untranslated Latin chemistry texts sitting in European libraries. A research-grade translation layer turns the entire corpus into a searchable resource. Instead of spending years finding the right text, the scholar spends hours — then invests deep translation effort where it actually matters.

The Translation Pipeline

The system we built at Source Library is designed for expert-driven translation, not automation. The key insight: prompt refinement is where expertise lives.

A subject matter expert reviews a sample translation, then refines the prompts for a specific text: specifying that anima mundi should be translated as “world soul” (not “soul of the world”), that the audience is graduate students in history of science, that the tone should be scholarly but accessible. These refined prompts are then applied to the entire book.
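
As a rough sketch of what such per-book instructions could look like in code: the class name, its fields, and the rendered wording below are all hypothetical, not the actual Source Library schema. Only the example values (the term choice, audience, and tone) come from the paragraph above.

```python
from dataclasses import dataclass, field


@dataclass
class BookPromptConfig:
    """Per-book instructions refined by a subject matter expert (hypothetical schema)."""

    audience: str
    tone: str
    # Latin term -> required English rendering.
    term_overrides: dict = field(default_factory=dict)
    # Latin term -> rendering the expert explicitly rejected.
    rejected: dict = field(default_factory=dict)

    def render(self) -> str:
        """Build the instruction text that is reused for every page of the book."""
        lines = [
            "Translate the following Latin page into English.",
            f"Audience: {self.audience}.",
            f"Tone: {self.tone}.",
        ]
        for latin, english in self.term_overrides.items():
            rule = f'Always translate "{latin}" as "{english}"'
            if latin in self.rejected:
                rule += f' (not "{self.rejected[latin]}")'
            lines.append(rule + ".")
        return "\n".join(lines)


config = BookPromptConfig(
    audience="graduate students in history of science",
    tone="scholarly but accessible",
    term_overrides={"anima mundi": "world soul"},
    rejected={"anima mundi": "soul of the world"},
)
prompt = config.render()
print(prompt)
```

Keeping the expert's decisions as data rather than free text means they can be reviewed, versioned, and applied identically to every page.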

Each page receives the previous page’s OCR and translation as context, maintaining continuity across page breaks. A running glossary tracks every translated term throughout the book. The result is far more consistent than page-by-page translation without context.
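
The loop described above might be sketched as follows. The function names and the translator signature are hypothetical, the model call is replaced by a stand-in so the example runs on its own, and the rule that the first rendering of a term wins is an assumption about how a running glossary could stay consistent.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class PageResult:
    ocr: str
    translation: str


def translate_book(pages: list, prompt: str, translate: Callable) -> tuple:
    """Translate pages in order, passing the previous page and glossary as context.

    `translate` is a hypothetical callable taking (prompt, ocr, previous, glossary)
    and returning (translation, new_terms).
    """
    glossary: dict = {}
    previous: Optional[PageResult] = None
    results = []
    for ocr in pages:
        # Pass a copy so the translator cannot mutate the running glossary.
        translation, new_terms = translate(prompt, ocr, previous, dict(glossary))
        for latin, english in new_terms.items():
            glossary.setdefault(latin, english)  # ASSUMPTION: first rendering wins
        previous = PageResult(ocr, translation)
        results.append(previous)
    return results, glossary


def fake_translate(prompt, ocr, previous, glossary):
    """Stand-in for a model call: reports the context it was given."""
    seen = "none" if previous is None else previous.ocr
    return f"[EN:{ocr}|prev:{seen}|terms:{len(glossary)}]", {ocr: ocr.upper()}


results, glossary = translate_book(["pagina i", "pagina ii"], "prompt", fake_translate)
for page in results:
    print(page.translation)
```

The second page sees both the first page's text and the one glossary entry it produced, which is the continuity the pipeline relies on across page breaks.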

The Infrastructure

The Second Renaissance project maintains a Supabase backend with the full USTC dataset — 1.6 million edition records, 99.9% enriched with AI-generated metadata: English titles, detected languages, work types, subject tags, religious tradition, and classical source identification.

This enrichment used Gemini 2.0 Flash and Claude Haiku to add structured metadata to each record, turning bare bibliographic entries into searchable, categorizable, analyzable data. For the first time, it’s possible to ask questions like “how many commentaries on Aristotle were printed in Germany between 1550 and 1600?” and get an answer.
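
Once records carry structured fields, a question like that reduces to a filter. The sketch below works on an in-memory list rather than the Supabase backend, and the field names and sample rows are invented placeholders, not the real schema or real USTC records.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Edition:
    """Hypothetical shape of an enriched record; field names are assumptions."""

    year: int
    country: str
    work_type: str
    classical_source: Optional[str]


def count_commentaries(editions, author: str, country: str, start: int, end: int) -> int:
    """Count commentaries on `author` printed in `country` from start to end inclusive."""
    return sum(
        1
        for e in editions
        if e.work_type == "commentary"
        and e.classical_source == author
        and e.country == country
        and start <= e.year <= end
    )


# Made-up placeholder rows for illustration only, NOT records from the USTC.
SAMPLE = [
    Edition(1555, "Germany", "commentary", "Aristotle"),
    Edition(1580, "Germany", "commentary", "Aristotle"),
    Edition(1580, "Italy", "commentary", "Aristotle"),    # wrong country
    Edition(1590, "Germany", "edition", "Aristotle"),     # not a commentary
    Edition(1610, "Germany", "commentary", "Aristotle"),  # outside the date range
    Edition(1570, "Germany", "commentary", "Galen"),      # different source author
]

answer = count_commentaries(SAMPLE, "Aristotle", "Germany", 1550, 1600)
print(answer)
```

The answer is only as reliable as the AI-generated tags it filters on, so counts like this are best treated as a starting point for closer checking.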

The Window

The scholars who can read Renaissance Latin, who understand the intellectual context of these texts, who can validate AI translations — they are retiring. Their students are fewer. The departments that trained them are shrinking.

AI translation without scholarly validation is dangerous — plausible-sounding output that might be subtly wrong in ways only an expert would catch. We have maybe a generation where both the technology and the expertise coexist.

The original Renaissance translators worked under a similar constraint. The Greek texts they recovered were degraded, copied by scribes who didn’t always understand what they were copying, sometimes corrupted across centuries of transmission. The translators had to be both linguists and scholars — able to reconstruct meaning from imperfect sources. Their work was imperfect too. It was enough.

We’re proposing something similar: imperfect translations, made quickly, validated by experts, good enough to reveal what’s been hiding in plain sight for 500 years. To create a second Renaissance, translate the first.

How It Was Built

The census and infrastructure were built through Claude Code sessions, starting from a single discovery:

> I learned about the unesco translationium project

January 2026, building the census:

> I want to be able to report on whether a book we translate is the first time the work has been translated

> how many are translations of the same work?

That UNESCO Index Translationum discovery led to scraping 50+ publisher catalogs and building the 7,542-record census. The “how many are translations of the same work?” prompt led to the deduplication logic that revealed 16,000 translated works out of 533,000 editions — the 3% figure that frames the entire project.
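
A minimal sketch of how such deduplication could work, assuming each census record carries an author and a title. The normalization rules and function names are illustrative guesses, not the project's actual logic; real matching would also have to cope with variant author names and translated or abbreviated titles.

```python
import re
import unicodedata
from collections import defaultdict


def normalize(text: str) -> str:
    """Lowercase, strip accents and punctuation, and collapse whitespace."""
    decomposed = unicodedata.normalize("NFKD", text)
    no_accents = "".join(c for c in decomposed if not unicodedata.combining(c))
    cleaned = re.sub(r"[^a-z0-9 ]", " ", no_accents.lower())
    return " ".join(cleaned.split())


def work_key(author: str, title: str) -> tuple:
    """Key under which different translations of the same work should collide."""
    return normalize(author), normalize(title)


def group_by_work(records: list) -> dict:
    """Group translation records by the work they translate."""
    groups = defaultdict(list)
    for rec in records:
        groups[work_key(rec["author"], rec["title"])].append(rec)
    return groups


def is_first_translation(author: str, title: str, groups: dict) -> bool:
    """True if no existing record shares this work's key."""
    return work_key(author, title) not in groups


# Three records whose spelling varies the way scraped catalog entries might.
records = [
    {"author": "Virgil", "title": "Aeneid"},
    {"author": "VIRGIL", "title": "Aeneid."},
    {"author": "Ovid", "title": "Metamorphoses"},
]
groups = group_by_work(records)
print(len(records), "records,", len(groups), "distinct works")
```

Grouping first and counting groups is what separates "how many translations exist" from "how many works have been translated", and the same lookup answers whether a new translation would be the first.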

You can watch a compressed replay of a Second Renaissance building session at codevibing.com.

The census data, the enriched USTC dataset, and the translation infrastructure are open at secondrenaissance.net and sourcelibrary.org.